Your AI is only as trusted as you are.

McKinsey's 2026 State of AI Trust report landed this week with a number that looks like a victory lap. 88% of organizations now use AI in at least one business function. Up from 33% two years ago. Sounds like the question's settled.

It's not.

The Thales Digital Trust Index, published the same month, tells the other side of the story. Only 23% of consumers trust companies to use AI responsibly with their data. 77% say AI doesn't make them trust a company more. And 37% say it actively makes them trust a company less.

Companies are deploying AI faster than their customers are willing to trust it.

So 88% of companies are putting AI to work. But only 23% of consumers believe they're doing it responsibly. That's not a trust gap. It's a credibility canyon.

The gap isn't uniform

Most coverage of these numbers treats AI adoption like a light switch. On or off. Adopted or not.

But it's never that clean. McKinsey's own data shows that only about 6% of organizations qualify as "high performers," the ones actually seeing real profit impact from AI. The other 82% that use AI are experimenting, piloting, or checking a box.

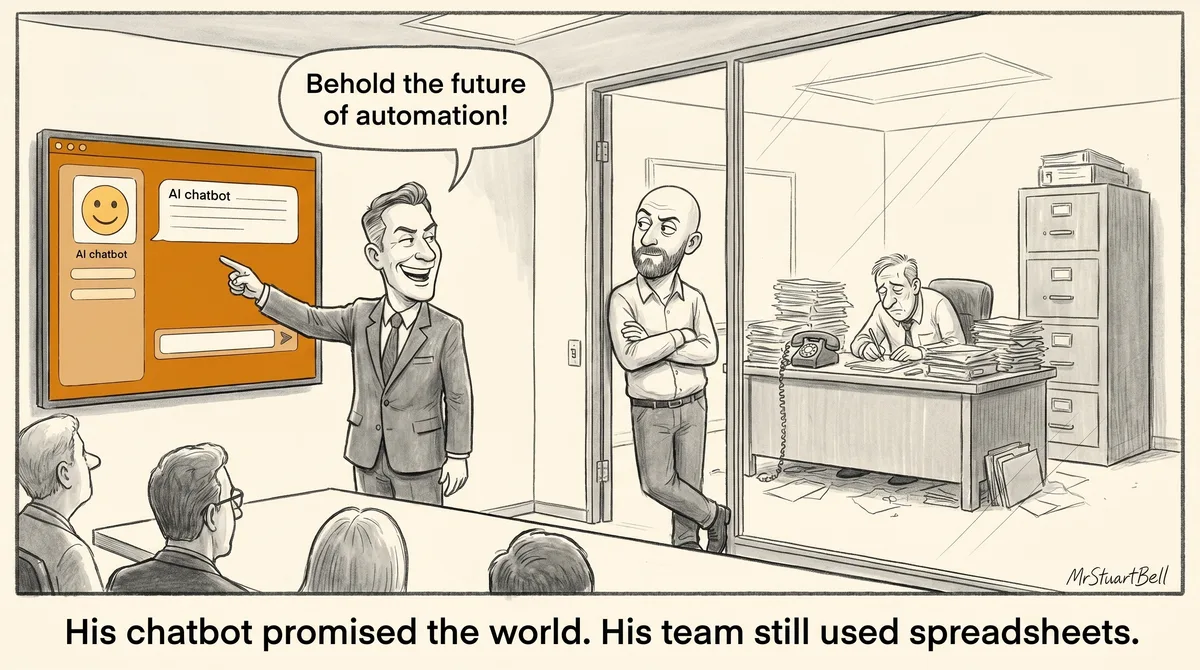

That split matters because most companies deploying front-facing AI don't have the behind-the-scenes ability to back it up. They've got the chatbot on the website, but nobody's redesigned the workflow behind it. They've automated the email, but the follow-up still reads like a template. 55% of high performers have fundamentally redesigned their workflows around AI. Only 20% of everyone else has.

Your prospects can feel that gap even if they can't name it.

Your prospects aren't waiting

Your customers are already using AI themselves. They're asking ChatGPT to research you before they pick up the phone. They're getting used to tools that understand context, remember preferences, and give useful answers.

That sets a benchmark. When they hit your AI-generated content that reads like it was written by a committee, or your chatbot that uses a completely different tone from the rest of your messaging, the contrast is obvious.

This is where the trust gap compounds. It's not just that consumers don't trust corporate AI in the abstract. Their personal experience with AI is setting expectations that most companies can't meet. The domain expertise you've spent years building doesn't show up in a generic AI implementation. And when it doesn't show up, trust drops.